Today Talis announced they are moving their focus away from Linked Data. I think my initial reaction, which I tweeted, holds true: this is on similar lines to Microsoft announcing plans to move away from Windows.

Earlier this year I was at an event where I bumped into two people from Talis. The one who knew me introduced me to the other; I think he described me as ‘a long time Talis watcher’. Whether or not I have quoted him accurately, that statement is probably true, and I therefore want to muse a little. Forgive me (especially if you are involved).

Here are my thoughts in a personal capacity. I start with some history; it goes on a bit, so feel free to skip past it. At the end I move on to today’s announcement.

Some History, it goes on a bit

First, I want to go back a few years. I had started working at Sussex in late 2002 and this was my first time using/running the Talis Library Management System. Talis’ history is fairly well-known: it started in the late sixties as the Birmingham Libraries Cooperative Mechanisation Project (BLCMP), a co-operative shared service project between Birmingham Libraries. It developed over the years to provide what became known as a Library Management System (or Integrated Library System), and other libraries – both public and academic – joined the co-operative (i.e. it was customer owned), the system being known as Talis. Around 2000 (which was around when Sussex migrated to Talis), the company changed its name to match the name of its core product – Talis – and (I think) at this point became employee owned. I should add, for those new to such things, that in the Academic Library Management System (loans, fines, buying books, cataloguing, etc) market there were probably around six main players in the UK. All but Talis were international companies, many with well over 1,000 customers. Talis had roughly 100 customers (half public, half academic). In simple terms, this meant revenue of about a tenth of what the others had to spend. I was always very aware of this, and impressed by the way Talis kept up with the competition.

When I started at Sussex the system here had only been live a year, and the server was a bit of a mess from a System Administration point of view. There seemed to be little structure to where components lived on the system, and many components seemed to have several versions installed, yet often an older version seemed to be the one running. The top-level of the system had lots of files with silly names such as “ “ or “~”. The previous administrator was new to Unix/Solaris and, to be blunt, had taken to it like a duck to sulphuric acid. My frustration here was mostly that I had no idea what was the result of specific requirements of Talis and what was down to the whims of previous Sussex staff; what was essential to the running of the system (no matter how unusual) and what needed to be cleaned up. The crontab for root had about 1,000 lines, and I won’t even start describing the printing system.

One of these frustrations was the web catalogue. For a start it was running the CERN HTTPD (Netcraft pretty much confirmed you could have fitted all the servers still running CERN httpd into a classic Mini). Ironically the first thing I had done at my previous job was migrate the web catalogue to Apache. It is usual with third party systems to have clear documentation as to which web server/version is supported (or even for it to be installed with the application itself with no choice or involvement by the customer, as was the case with Prism 2). It took a while to find out if we could move to Apache (perhaps CERN was the only thing they supported), which version we should move to, and if there was guidance on configuring Apache to serve the web application. Oh, and the web application seemed to live in about five different duplicate locations on the file system (Grrr). Like a lot of the web at the time, it used frames, and images for the menu text.

In 2003 Talis released Prism, their next generation web catalogue. It ran on a separate server to the main Talis system. Based on the information we gave them they recommended two (entry level) Dell servers. These were essentially shipped to Talis where they were fully configured; we just had to plug them in. One slight annoyance was that it was a master/slave configuration, with the master passing sessions to the slave to load balance. If the master died, the whole service died.

Prism was a great leap forward: it looked like a modern web application, and I was quite pleased to see it was a Java-based application (the ugly URLs are always a give-away). We had our servers up and running by summer 2003, but did not make it our default catalogue until summer 2004. Prism was not perfect: it timed out after a period of inactivity (nothing worse than leaving a record on screen for the book you must have, only to come back to a ‘session has timed out’ message), and had no relevance ranking. One example was searching for books about the web: books starting with the word web would come near the end (‘w’), with lots of unrelated (but matching the search) books coming before. You can see an example here (this uses Manchester Metropolitan University, we have recently shut down ours).
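To make the ranking complaint concrete, here is a minimal sketch in Python. It says nothing about how Prism was actually implemented; the titles, the `score` function and its crude weights are all invented for illustration. It simply contrasts a plain alphabetical sort with the most basic relevance ordering imaginable.

```python
def alphabetical(titles):
    """Order results by title only, ignoring the search term entirely."""
    return sorted(titles, key=str.lower)


def score(title, term):
    """Hypothetical scoring: 2 if the title starts with the term,
    1 if the term appears as a later word, 0 otherwise."""
    words = [w.strip(".,:;").lower() for w in title.split()]
    term = term.lower()
    if words and words[0] == term:
        return 2
    if term in words:
        return 1
    return 0


def ranked(titles, term):
    """Best-scoring titles first; ties fall back to alphabetical order."""
    return sorted(titles, key=lambda t: (-score(t, term), t.lower()))


# Made-up result set: every record is assumed to match the search somewhere
# in the full record, but only some match in the title.
RESULTS = [
    "A history of the internet",
    "Designing for the web",
    "Web design in a nutshell",
    "Networks and society",
]
```

With these invented titles, `alphabetical(RESULTS)` puts "Web design in a nutshell" last, while `ranked(RESULTS, "web")` puts it first. A real catalogue would need far more than this (stemming, field weighting, and so on), but even something this crude avoids the ‘w’ problem.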

What made me happy was that Talis saw the release of Prism 1 (and 1.1) as just the very first steps in a long line of developments. I attended the Talis User Symposium 2003 where this was discussed. As a technical aside, I think it was at this event that I asked, if the Linux boxes were to be treated as ‘black boxes’, what would happen regarding security updates, OS upgrades etc. I was told that as they were such a minimal install, and so locked down (they’re public web servers!), this would not be required. I’ve just checked: the last of these boxes will soon be decommissioned here, and ‘uname -a’ shows “Red Hat Linux release 8.0 (Psyche)” (not to be confused with Red Hat Enterprise Linux or anything modern like that) – we are running a system released in 2002, which we were told we shouldn’t be patching.

If I’m being open about Talis, then I should be open about something else. Its customers. I had been involved with a couple of other library systems. Customers were often frustrated with the slow pace of developments and poor service, but meetings and conferences were always professional and diplomatic exchanges, with both sides understanding the realities of the other. As such I had never witnessed such negativity and moaning, especially aimed at new developments. Each new product or version was seen by many customers purely in the negative. We’ll have to update our (overly complex and probably unneeded) user guide, it probably won’t work, it will probably have bugs, it won’t be useful to our users, what’s wrong with the old version. At conferences, on mailing lists, in meetings, the (vocal) majority were against anything new, and unable to see that while the new may not be perfect, it was considerably better than what was before and probably even the competition. (I should add this is some years ago, and much has changed in the last 10 years, nor due to my current job have I attended any meeting about the LMS for at least seven years).

In November 2004 two people came down from Talis to talk to a few of us at Sussex. As part of this we had a conversation about Prism: where it was going in the future. This mainly involved us saying ‘wouldn’t it be brilliant if Prism did X’ and them saying ‘That’s great, we’re so pleased you’re saying that, the next version can do that, it’s going to be available any day now’. That release never really came. A fix (Prism 1.2 I think) came out early 2005 for a bug some users were having (this started out as an ‘only upgrade if you have this bug’ but at some point became ‘why haven’t you upgraded to the latest version yet’). Prism 1.3 was released in August 2005, and in 2006 Prism 2 was released. This did have new features: it worked with MARC21 (a newer version of a common – and dire – bibliographic standard), worked with 13-digit ISBNs and worked with Unicode. While good stuff, these were hardly features that users would really notice.

A classic example of requested functionality was the ability to export to Endnote. A method to do this did appear on the Talis Developer Network (TDN) and a senior member of staff at my Library emailed me to check that I was fully on top of such things. Only… it was written by me. I had created a filter for Endnote which allows you to cut and paste the output of Prism into a text file and then use the filter to correctly import the file into your Endnote library (it matched the text displayed next to the record details in Prism with the matching fields in Endnote). I had documented this on the Sussex website, and Talis, with my permission, had adapted it as a guide. I confess, I felt smug.
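The idea behind the filter (match the label shown next to each value, then map it to a bibliographic field) can be sketched in a few lines of Python. This is not the original filter, which was an import filter configured within Endnote rather than a script; as a stand-in it writes RIS, a tagged plain-text format that reference managers commonly import. The display labels in `LABEL_TO_RIS` are assumptions, not the labels Prism actually used.

```python
# Assumed display labels (hypothetical) mapped to standard RIS tags.
LABEL_TO_RIS = {
    "Author": "AU",
    "Title": "TI",
    "Publisher": "PB",
    "Year": "PY",
    "ISBN": "SN",
}


def pasted_record_to_ris(text):
    """Convert pasted 'Label: value' lines into a single RIS book record.

    Lines whose label is not in LABEL_TO_RIS are silently skipped.
    """
    lines = ["TY  - BOOK"]
    for raw in text.splitlines():
        # Split on the first colon only, so titles containing colons survive.
        label, sep, value = raw.partition(":")
        tag = LABEL_TO_RIS.get(label.strip())
        if sep and tag and value.strip():
            lines.append(f"{tag}  - {value.strip()}")
    lines.append("ER  - ")
    return "\n".join(lines)


# Made-up pasted record for illustration.
SAMPLE = """Title: Weaving the web: the original design
Author: Berners-Lee, Tim
Shelfmark: QA 76
Year: 1999"""
```

Calling `pasted_record_to_ris(SAMPLE)` gives a record that starts with `TY  - BOOK`, carries the title, author and year, drops the unmapped shelfmark line, and ends with `ER  - `.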

Around the same sort of time Talis Graphical was being developed. Talis Graphical was a Windows GUI to the Talis system; until then, all staff activity was via a Unix application accessed via a Telnet or SSH client. Like all Unix applications that use function keys (hello Ingres Client), Term Types and keyboard mappings were a bit of a dark art. I liked Graphical a lot and give Talis a lot of credit for it, especially compared with some of the other LMS systems’ Windows applications, which were amazingly bad. I was also impressed that all keyboard functionality was consistent between the traditional Unix application (now called Talis Text) and the new Windows client.

Talis Graphical became Talis Alto on its release. A few years later the phrase Talis Alto started to be used in some places as a term to encapsulate the entire LMS, and gradually this became the norm. A minor thing, but I wished such changes in terminology had been announced. The message – when it was queried – was that the client was the LMS and hence Alto had always been the new name of the LMS to distinguish it from the company (named, if you remember, after it).

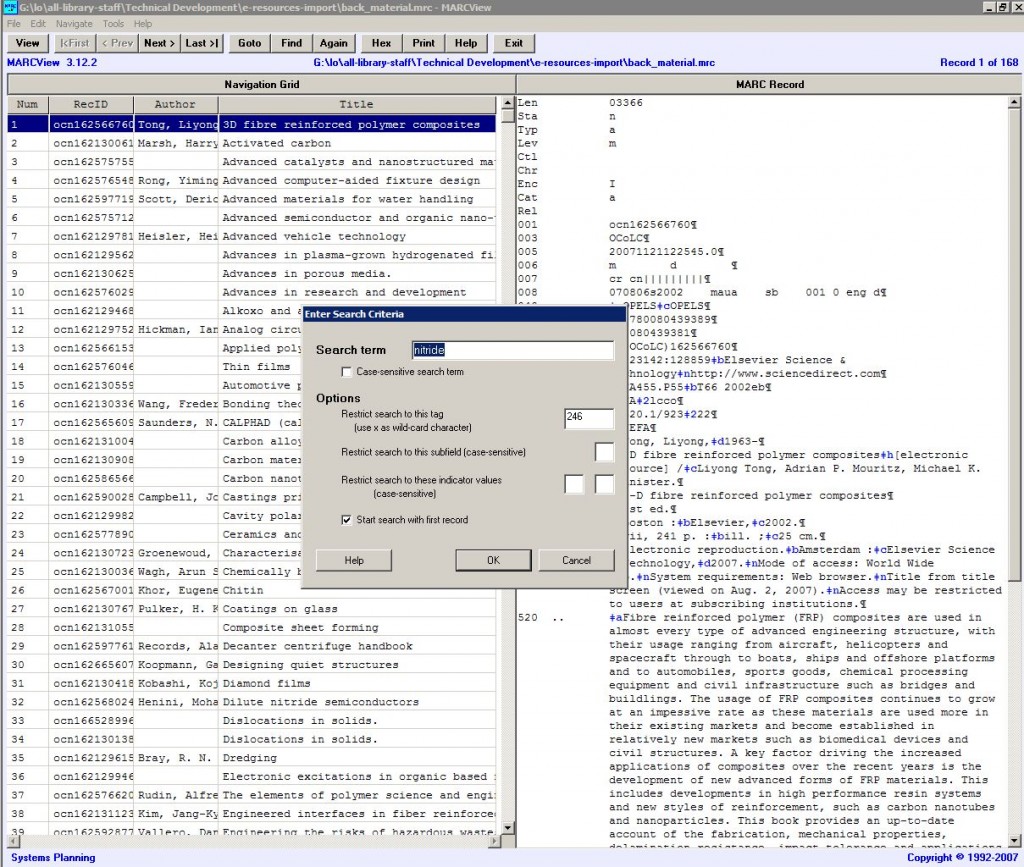

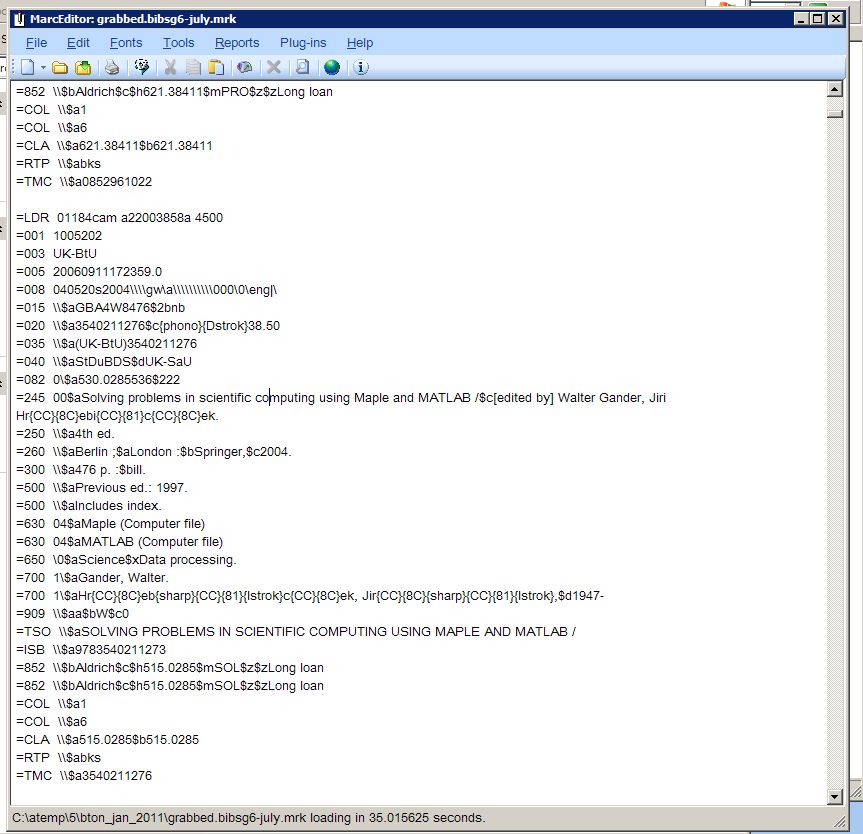

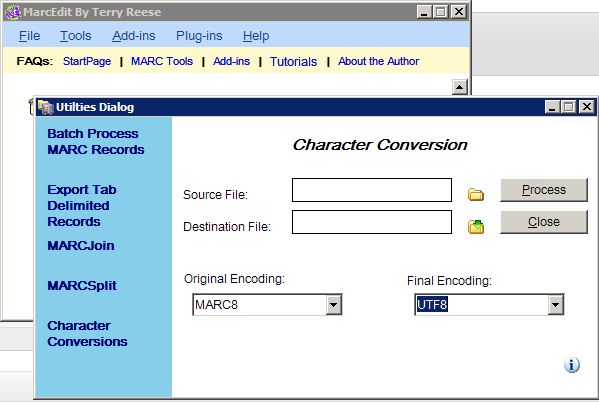

The Talis Alto LMS was (is) a good system. It had its pros and cons. It used a relational database AND actually used it as a relational database (in the Library system world this double is rare) and as a company they were open and approachable. At the same time their documentation was poor, and trying to find out what documentation was current, and likewise what the latest release of a product was, was a pain. At one point after an update the ‘scratch’ area on our system kept filling up, and on inspection a good number of very large files were there. After more work than it should have been, it turned out the latest release had a new indexing system: half the files were the (essential) indexes and half were meaningless log files from the indexing operations. All were stored within scratch, with no notice to set up jobs to plan for these large files, or rotate the log files. Elsewhere, it felt like half the system was run by a series of slightly confusing Perl scripts, and it was never fully clear which did what. It felt like it was painful for the system to adapt to a MARC21 world, one where bibliographic records are imported, bulk changed, and dropped with regularity (mainly for online content).

In 2005/06 Talis started to take on a new lease of life and confidence. Talis Insight 2005 had a list of high profile speakers from the Technology and Library worlds. A number of new initiatives and projects were started (see some of them at the bottom of the forums). The Talis Developer Network was started. Linked Data was being talked of, Panlibus the blog started (so did many other blogs), Panlibus the magazine did too. And then there’s Talking with Talis and The Library 2.0 Web Gang. There was a Library mashup competition, and the first mentions of the Talis Platform. Above all, Talis had recruited many new staff and it was clear from the blogs and concepts such as the TDN that they had some very smart people who understood the web and how to do things properly. This gave me confidence in the applications I could expect to see in the future.

One of the early things I liked was Richard Wallis’ Talis Whisper Project, a ‘catalogue’ which also showed the price of the item on the right as you moved over search results, using the Amazon API, had a basic search auto-complete and could show you via a Google Map which Library had the item. In terms of look and feel, and these features, this was light years ahead of what we currently had. Soon after, we had Project Cenote – another library catalogue demo, built on top of the new Talis Platform. It was quick to build and showed the power of building applications on top of the new Platform thingy.

Talking of the Platform, this was something that was everywhere: on the library (Panlibus) blog, on the TDN, and on the new more technical blogs now being set up. It even had its own newsletter. In 2007 some clueless wanna-be-developer tried to go through all these references to the Talis Platform and BigFoot and work out what these things were. The results were embarrassing.

Talis were certainly raising their profile: nationally and internationally, and beyond the traditional library market. Their call for Open Data, APIs, mashups and open standards struck a chord with me and gave hope for systems working together, especially (as we were Talis customers) systems we were paying for. They were pushing for the things I wanted. And they were telling much larger players in the international library market how to do things right, while talking about cutting edge stuff at the WWW conference. And as we rolled into 2008, I got on to Twitter, where Talis staff were active, open and doing interesting stuff.

A few things troubled me. First I loved the Whisper catalogue demo, and waited, and then loved the Cenote catalogue demo. And I knew they were quick demos, and I knew they didn’t have half of the needed catalogue functionality in the real world, and I knew real products need testing, and I knew that they need to be built for exceptions, and for load. But… but… as I looked at Prism, the catalogue that was going to see regular updates and lots of new features from the day it was released in 2003 – but didn’t – I couldn’t help thinking, how long would it take to get just some of this stuff into Prism the product, Prism that we pay for? These demos did not time out, had a modern web look, nice URLs, worked on OS X(!). The ideas, projects, research areas, mashups and demos kept on coming on the blogs but when was this going to filter into, well, you know, the products?

There seemed to be a separation between Talis, which was producing some cutting edge thinking and ideas, and Talis the Library systems vendor, who – like many LMS providers – had a product that was looking somewhat tired, and not exactly technologically cutting edge (though I imagine the small team working on it had much better things to be doing). This separation was formalised as Talis showed a clear structure between its Library application side and the Talis Platform side. You can see this clearly in this March 2007 Talis homepage and the May 2007 homepage. Would the ideas of web technology, standards and openness filter from one to the other?

And there was something else bothering me: the business plan. The Talis Platform had been in public for some time, clearly a lot of development had gone into it, and a lot of documentation, guides, web casts, conference talks and more (all costing time and money). The same was true of modern library technology, with talks around the world, and world-wide Talking with Talis / Library 2.0 Gang podcasts and mashup competitions. What was the aim? Their Library market was the UK and Ireland, and I could see the Platform had a potentially global market, but what was the plan regarding Libraries? Were they going global there too? If not, why the push to market themselves (via talks and competitions) globally specifically in the library sector? Why not focus on the markets they planned to sell in and leave the global stuff to those advocating the global Talis Platform? How did a mainly US based Library Gang help them with a fairly conservative (and often cynical of technology) UK library customer base? Meanwhile, while it was understandable in the early days of the Platform that they were trying to build momentum and mind share, when were they going to sell something? Click here to buy Platform Space…. or Contact us to do a big enterprise deal. The website seemed short on calls to action. All this work, while good for the community, and great for someone like me, cost money and resources from a small company that I wasn’t convinced could afford to essentially pay for such broad community building, in the hope they would get some of the eventual business.

Talis had grown many tentacles, and had clearly made a name in the linked data and library technology sectors, but hard revenue on the back of this seemed slow to arrive. And this is from a customer (normally customers are crying out for companies to spend less time worrying about the bottom line and more on cutting edge stuff!).

In 2007 ‘Prism 3’, a new version of the catalogue which would be hosted, was much trumpeted by Talis, at account meetings and elsewhere, as ready for release by the end of the year. It would be built on the Talis Platform, and all my concerns about ‘the talk’ not going into ‘the walk’ would be gone.

This was good not just because I wanted to see some of the cool web stuff in our catalogue, but because of something more important. The National Student Survey. For those outside of UK HE, the NSS is big, and doing well in it is important. Essentially, finalists fill out a survey of questions about their experience while at University (rate your feedback from assignments from 1-5), one of which is about the Library. Like most Universities, Units such as the Library at Sussex produced a plan as to how they would increase student satisfaction (and hence the NSS score), and while certainly not a core issue, the catalogue had been noted as a weakness and we had therefore committed to improving it. It was a concern therefore when the end of 2007 came and went, and in early 2008, while we could see early demos of the new product (then ‘Project nugget’), it was now clear it would not be ready for the summer, when Universities traditionally launch new services.

Instead we went for a relatively new (but actually ready to use) product called Aquabrowser (implemented by the likes of Harvard, Cambridge, Edinburgh and Chicago) which could import MARC21 records from any system and act like a catalogue (well it could, if you ignore the need to log in and renew books and place reservations, which as it happens is quite a big need). We hit a very common problem in the Library technology world, and one I rant about at every opportunity (including this one): while MARC21 may be a bad standard, it is, at least, a standard, and it is, at least, used by most library systems for bibliographic information. The other type of information essential for a library catalogue is Holdings and availability information, i.e. for each item, the shelfmark and if it is on loan. This is simple information, and a common standard could be developed in an afternoon in a pub on an envelope and yet one does not exist. Instead, Aquabrowser screen scraped the Prism 2 record page for the item it wanted to show details for (all geeks will dance with joy at this wonderful solution). Active development in Aquabrowser seemed to end the day we signed up for it.
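To show how little information is actually involved, here is the sort of thing that envelope might contain, sketched in Python. No such standard exists, as noted above, so every field name here (`barcode`, `location`, `shelfmark`, `on_loan`, `due_back`) is an assumption made up for illustration, as is the choice of JSON for the wire format.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass
class ItemHolding:
    """One physical copy of a catalogued work (hypothetical schema)."""
    barcode: str
    location: str
    shelfmark: str
    on_loan: bool
    due_back: Optional[str] = None  # ISO 8601 date, only set when on loan


def holdings_to_json(record_id: str, items: List[ItemHolding]) -> str:
    """Serialise the holdings for one bibliographic record."""
    payload = {"record_id": record_id, "items": [asdict(i) for i in items]}
    return json.dumps(payload, sort_keys=True)


def available_count(items: List[ItemHolding]) -> int:
    """Number of copies not currently on loan."""
    return sum(1 for item in items if not item.on_loan)


# Made-up example data.
COPIES = [
    ItemHolding("1001", "Main Library", "QA 76.9", on_loan=False),
    ItemHolding("1002", "Main Library", "QA 76.9", on_loan=True, due_back="2012-07-30"),
]
```

A discovery layer that received `holdings_to_json("rec-42", COPIES)` from the LMS would have everything it needs to show shelfmark and availability without scraping a record page.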

A quick note about another of Talis’ products: TalisList. TalisList was a reading list system and the unloved ugly duckling. At one event, which a colleague was at, a senior person at Talis said ‘with the new Talis Platform it’s just going to take a few months of Agile Scrums to quickly build the new TalisList application, that’s why this stuff is so good’. This follows the received wisdom in IT that to accurately predict development time you should take the time given in months and convert the units to years. And then add a couple more as well. At the end of it, we had Aspire, of which we were the second customer.

2011

In March 2011 Talis announced the sale of the Library division to Capita. This was big news and, I confess, quite shocking; I hadn’t seen it coming. Yet it quickly made sense: Talis was more and more acting like two companies under the same roof. One provided a traditional library system and made money from support, consulting and extra services. The other had the Talis Platform and a number of exciting developments around it, plus the Education side, built on top of the platform, which consisted of Aspire.

Talis had made a big statement: they had sold the Unit which they were named after, which was their history and main source of revenue. It showed a coming of age of the Linked Data work that had been taking place, and a confidence that there was business there to be made. The sale of the Library division gave them cash to get going and allowed them to be lean and focused.

The Library Systems market had matured: most libraries had a system they were happy with, and the cost of changing systems (training, integration with other existing systems) was high. While there was on-going income from service contracts and new developments, this was not a good fit for where Talis wanted to be. For a company like Capita there must be additional benefits in offering such a system as part of a larger suite of products to their public sector customers.

The split was, from the outside, a clean one. The only slight grey areas were the Web Catalogue (Prism 3) on the Library side being built on top of the Talis Platform now on the Talis side (but then, Talis were always keen to host other companies’ data), and the fact that many customers of the Library System (Alto), now owned by Capita, were also customers of Aspire, which remained with Talis. Any benefits for customers of having two products from the same supplier were gone.

Talis continued as three Units: Talis Education (Aspire), Kasabi (a data store and related services) and Talis Systems, who provided Consulting. Presumably the developers and System Administrators who managed the actual Platform fitted under the latter.

2012

And now we get to the point of this post. All that above is really just a very long comment in parenthesis to set the context of where I am coming from. I’ve been following Talis for a long time, partly because it is what I should do when they are our main system supplier, and partly because I was interested in their direction. And as my job moved more into innovation and new developments, again, this was useful for work (as one example, by following Talis, I grew interested in Linked Data, which in turn led me to the idea for SALDA, which became a JISC project).

Today, 9th July 2012, Talis announced a move away from Linked Data and the shutting down of Kasabi:

But there is a limit to how much one small organisation can achieve. In our view, the commercial realities for Linked Data technologies and skills whilst growing is still doing so at a very slow rate, too slow for us to sustain our current levels of investment.

We have therefore made the decision to cease any further activities in relation to the generic semantic web and to focus our efforts on investing in our growing Education business.

Effective immediately we are ceasing further consulting work and winding down Kasabi. We have already spoken to existing customers of our managed services and, where necessary, are working with them on transition plans.

You can see some extra thoughts here.

There are a lot of talented people at Talis, and this must be a sad day for both current and former staff. This has been their passion, and endless code, documentation, talks, presentations, plans and meetings have gone into building what they currently have. To think it could all be turned off must be an incredible blow.

While working in my cosy public sector job, I admire those who take risks. I follow the start-up scene in the US and closer to home, and in my own small way try to encourage those who work in Universities to try new ideas and be less afraid of risk (one example being blogging about a key commercial supplier in a way that might damage a client/customer relationship). Those who never fall never take big steps.

And Talis did take a massive risk. Credit to them. It sold, let us be blunt, the cash cow which must have brought in the vast majority of revenue. Yes this created a large pile of one-off cash, but this would not last, and they now had a set time limit for the Linked Data work to prove itself. Like any company, they would have planned this carefully, trying to predict growth and take-up of their services and plan for profitability.

Which perhaps makes today’s announcement even more surprising. No one could predict how long the economic downturn would continue for, or how much the Tories would cut back on new public sector IT projects, or how ideas like Kasabi would go down. But my basic calculations as to how far several million pounds from the sale, plus consulting fees, plus Aspire fees would go, did not leave me thinking that the crunch point would be now. Of course no company waits until its last pound before taking appropriate action when outgoings are more than income, but I’m amazed that just 16 months after confidently saying Linked Data is the future for Talis, we now hear the opposite is true.

Two out of three divisions are to close, the third looks like it will be re-engineered to move away from Linked Data. (Ironically, in this blog’s drafts folder is a recent attempt at playing with the Aspire API and being ever so slightly frustrated – while acknowledging my limited experience – about how the linked data concept can result in having to make many calls to the API for what one can get in just one API call using the laughably uncool CSV format).
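The frustration in that parenthesis is easy to show in miniature. The Python sketch below does not use the real Aspire API; the URIs, the document shapes in `RESOURCES`, the `CountingClient` class and the CSV export are all made up. It only illustrates the general pattern: when each resource links to the next, getting a flat list of titles means one request per hop, whereas a flat export answers the same question in one go.

```python
import csv
import io

# Hypothetical linked-data store: each URI resolves to one small document
# that mostly contains links to other documents.
RESOURCES = {
    "/lists/1": {"title": "Intro reading", "items": ["/items/1", "/items/2"]},
    "/items/1": {"resource": "/resources/10"},
    "/items/2": {"resource": "/resources/11"},
    "/resources/10": {"title": "Book A"},
    "/resources/11": {"title": "Book B"},
}

# Hypothetical flat export of the same reading list.
CSV_EXPORT = "list,title\nIntro reading,Book A\nIntro reading,Book B\n"


class CountingClient:
    """Stand-in for an HTTP client; counts how many 'requests' are made."""

    def __init__(self):
        self.calls = 0

    def get(self, uri):
        self.calls += 1
        return RESOURCES[uri]


def titles_by_following_links(client, list_uri):
    """Walk list -> item -> resource, one request per hop."""
    reading_list = client.get(list_uri)
    titles = []
    for item_uri in reading_list["items"]:
        item = client.get(item_uri)
        titles.append(client.get(item["resource"])["title"])
    return titles


def titles_from_csv(text):
    """Read the same titles from a single flat export."""
    return [row["title"] for row in csv.DictReader(io.StringIO(text))]
```

In this toy setup a two-item list costs five requests by link-following (one for the list, then two per item), and the count grows with every item added. That is the trade-off rather than a flaw as such: the linked form is more flexible, the flat form is cheaper for the simple case.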

There were some signs: in the last six months a number of key people have left, and Aspire Development has made mention of a new infrastructure and platform. I did find talk of this odd when so much of Aspire’s infrastructure is The Platform, which removes the need to worry about it, so I guessed one interpretation was that moving to a new platform meant moving away from the Linked Data based Talis Platform.

And what about The Platform? It really is the thing that has been there from the start. For many years you could see the release notes for the latest monthly upgrade to the Platform’s software on a wiki; it was constantly being improved. The announcement does not explicitly say what will happen with the Platform. In the short term, not a lot: Aspire and Prism 3 are built on it, and I have no idea if other third parties are using it (plus, disclosure, we have some data on there).

Perhaps the Platform on its own could be profitable? And if companies don’t like the idea of hosting their data on someone else’s servers, can the Platform be bundled up and sold as a standalone product to be deployed internally by large enterprises? I guess probably not, especially if it is built with other third-party components.

I admire Talis’ steps to create a new company in a new area in uncharted waters, but wonder if there is anything to be learned from this. Could more have been done to test the market and accurately plot take-up, demand, and the best range of products/services before taking the jump?

Aspire (a University Reading list system) has good take up in the UK, and even some international customers. And while breaking into new countries is hard – and every country has a different HE setup (especially the US) – Aspire is a fairly unique product which might have many potential customers in countries that run Universities similarly to the UK. There are also complementary developments which may interest Universities (though with Moodle being free and Open Source, moving towards the VLE/e-learning-like functionality would be difficult).

I don’t normally publicly dissect the decisions and history of a business, nor my frustrations and experience with a commercial system in such detail (though when I have here, it is mainly from at least several years ago). I hope I haven’t upset anyone – or at least not too many people. Since I joined Sussex, nearly ten years ago, Talis has reinvented itself several times, and I’ve enjoyed trying to second guess its next move.

But I end with this. Never am I reminded so much that I am a creature with a small brain, of at best average intellect and shockingly poor ability to grasp what should be basic concepts, than when I read the blogs of those who work, or have worked, at Talis. Time and again I am blown away by smart thought, insight, comment and ideas. I wish Talis the best in its new direction (so long as that direction involves making Aspire bloody awesome… I’m still a customer).